ChatGPT, Gemini & HIPAA: The Complete AI Security Guide for Birmingham Healthcare Practices

Executive Summary

Free ChatGPT and consumer Gemini violate HIPAA when used with patient data, creating immediate, reportable breaches with fines reaching $2.19M annually. HIPAA-eligible alternatives exist (ChatGPT for Healthcare, Gemini in Google Workspace at the enterprise tier), but they require far more than a software subscription: a signed BAA, AES-256 encryption, MFA, audit logging, staff training, and governance aligned to NIST AI RMF 1.0. AllTech IT Solutions shows Birmingham healthcare practices exactly what compliance requires, and what an MSP provides that a vendor subscription cannot.

The Short Answer — Read This First

If your Birmingham healthcare practice is using the free version of ChatGPT or the consumer version of Google Gemini in any workflow that touches patient data, you are likely in violation of HIPAA right now.

Not a gray area. Not a technicality. An actual, enforceable, reportable breach — because no Business Associate Agreement (BAA) exists between your organization and OpenAI or Google for those consumer-tier products.

Key Takeaway

The breach occurs at the moment ePHI enters the prompt. It does not matter if nothing is stolen. Transmission without a BAA is a HIPAA violation under the Privacy Rule.

The good news: a compliant path forward genuinely exists. OpenAI offers ChatGPT for Healthcare, an enterprise-grade, HIPAA-eligible product with a BAA. Google offers Gemini in Google Workspace (enterprise tier) with BAA coverage. Both can be used legally with patient data, but only when properly procured, configured, and governed.

This guide explains exactly where the line is, what compliance actually requires, and why a software subscription alone doesn't get you across it. AllTech IT Solutions has protected Birmingham healthcare organizations since 2004. We'll be direct with you about every piece of this.

Why This Problem Exists — and Why It's Spreading Fast

AI productivity tools arrived faster than most healthcare compliance programs could respond.

A front-desk coordinator discovers that ChatGPT drafts patient follow-up letters in 30 seconds. A billing manager starts pasting insurance denial patterns into Gemini to find the common thread. A clinical staffer copies patient notes into an AI tool because the care summary was overdue and the day had gone sideways.

Nobody raises a flag. These tools are free, intuitive, and widely used. The problem is invisible — until it isn't.

Electronic Protected Health Information (ePHI): The HIPAA Definition That Changes Everything

ePHI is any individually identifiable health information created, stored, transmitted, or received electronically — including patient names, diagnoses, dates of service, insurance information, clinical notes, or any data that could identify a patient. HIPAA's Security Rule governs all ePHI. Entering ePHI into a non-covered AI tool is a HIPAA violation at the moment of transmission, regardless of downstream use. (HHS/OCR)

Shadow AI: The Invisible Risk

Unauthorized AI tools used by employees — often on personal devices — were involved in 20% of data breaches in 2025. Without monitoring, detection depends entirely on every employee making the right call in the middle of every busy workday. That is not a compliance strategy. (IBM Security, 2025)

What HIPAA Actually Requires for AI Tools

The Business Associate Agreement (BAA): The Non-Negotiable First Step

HIPAA's Privacy and Security Rules require any vendor that creates, receives, maintains, or transmits ePHI on behalf of a covered entity to sign a Business Associate Agreement (BAA). The BAA defines the vendor's obligations for protecting that data, including breach notification responsibilities, permitted uses, subcontractor obligations, and security standards.

No BAA = no HIPAA coverage. It doesn't matter how reputable the vendor is.

This rule has not changed with the arrival of AI. It applies to every vendor whose platform touches ePHI — including AI tools. Without a signed BAA:

- Data your staff enters into the AI tool is unprotected under HIPAA

- The transmission itself constitutes a breach under the HIPAA Privacy Rule

- Your organization bears full liability for the violation

- HHS/OCR can investigate and fine you — even if nothing is stolen

The Proposed HIPAA Security Rule Update (2026)

In December 2024, HHS/OCR issued a Notice of Proposed Rulemaking (NPRM), published in the Federal Register on January 6, 2025, proposing the first significant update to the HIPAA Security Rule since 2013. The NPRM proposes to reclassify several safeguards from "addressable" (you can document a reasonable alternative) to "required" (no alternative is accepted). Proposed required safeguards include:

- Encryption of ePHI at rest and in transit

- Multi-factor authentication (MFA) for all systems accessing ePHI

- Audit logging of all ePHI access

- Asset inventories for all systems that create, receive, maintain, or transmit ePHI

- Formal incident response plans

⚠️ Current status (April 2026): The Trump administration is reviewing the proposed rule. The final rule may differ from the proposed version or be delayed. However, OCR's enforcement of existing HIPAA Security Rule requirements continues actively — OCR issued $8.33 million in fines in 2025, with an average penalty of $396,670. Practices that haven't implemented encryption, MFA, and audit logging face enforcement risk under current rules, regardless of the NPRM's final status. (HHS/OCR; HIPAA Journal, 2026)

Alabama's Stricter Notification Requirement

Alabama's Data Breach Notification Act (enacted 2018) requires notification of affected individuals and the Alabama Attorney General within 45 days of determining a breach has occurred — 15 days faster than HIPAA's 60-day federal window. Violations carry penalties up to $5,000 per day.

Birmingham healthcare organizations are operating under both federal HIPAA rules and Alabama state law. Both apply simultaneously.
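To make the two clocks concrete, here is a minimal sketch of the deadline arithmetic. It assumes, as a simplification, that both windows start on the same date (the day the breach is determined) and uses the 45-day and 60-day figures stated above. The function names are illustrative, and the result is a planning aid, not legal advice.

```python
from datetime import date, timedelta

# Windows as stated in this guide; confirm against current law before relying on them.
ALABAMA_WINDOW_DAYS = 45
HIPAA_WINDOW_DAYS = 60


def notification_deadlines(determined_on: date) -> dict[str, date]:
    """Return the latest notification date under each rule.

    Assumption: both clocks start on the same determination date.
    """
    return {
        "alabama": determined_on + timedelta(days=ALABAMA_WINDOW_DAYS),
        "hipaa": determined_on + timedelta(days=HIPAA_WINDOW_DAYS),
    }


def binding_deadline(determined_on: date) -> date:
    """The earlier deadline is the one the practice has to plan around."""
    return min(notification_deadlines(determined_on).values())


deadlines = notification_deadlines(date(2026, 3, 2))
print(deadlines["alabama"])                 # 2026-04-16
print(deadlines["hipaa"])                   # 2026-05-01
print(binding_deadline(date(2026, 3, 2)))   # 2026-04-16
```

In practice the Alabama date is the one to put on the calendar, since it always arrives first under these assumptions.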

ChatGPT in Healthcare: Which Version Is Legal?

OpenAI offers multiple product tiers. Only two are HIPAA-eligible. The difference is critical.

Consumer ChatGPT (Free, Plus, Pro Plans) — Do Not Use with ePHI

Using any consumer ChatGPT plan (Free, Plus, or Pro) with patient data is a HIPAA violation at the moment of input — before any downstream use occurs. OpenAI's Terms of Service explicitly state that standard consumer plans are not designed for HIPAA-covered use cases. (OpenAI, Enterprise Privacy, January 8, 2026)

Reality Check

Using free ChatGPT with patient data is like texting a patient's diagnosis to a random phone number because the service is convenient and free. The outcome — unprotected transmission of ePHI — is the same.

ChatGPT for Healthcare & ChatGPT Enterprise — HIPAA-Eligible (with proper setup)

OpenAI offers two HIPAA-eligible product tiers:

- ChatGPT for Healthcare: Purpose-built enterprise workspace with clinical workflow features, covered under OpenAI's BAA, designed specifically for healthcare organizations

- ChatGPT Enterprise: General enterprise tier with custom BAA terms; HIPAA-eligible when procured through sales-managed contract with healthcare-specific configurations

Important caveats: The BAA covers OpenAI's obligations — not yours. What your employees type into prompts, how you've configured access controls, and whether your staff understands what ePHI should or shouldn't enter a prompt are entirely your responsibility. The BAA is the starting line, not the finish line.

ChatGPT Business is not eligible for a BAA. Only sales-managed Enterprise accounts and ChatGPT for Healthcare qualify. (OpenAI, Enterprise Privacy)

Estimated cost: ChatGPT Enterprise requires a custom sales contract, with pricing negotiated based on organization size and deployment needs. For reference, the self-serve ChatGPT Business tier (formerly Team) is publicly listed at approximately $25–30 per user/month, but that tier is not BAA-eligible. Contact OpenAI's sales team for healthcare-specific pricing.

Google Gemini in Healthcare: Which Version Is Legal?

The same consumer/enterprise distinction applies to Google's AI products.

Consumer Gemini (gemini.google.com with a personal Google account) — Do Not Use with ePHI

The consumer version of Gemini carries no BAA, no HIPAA protections, and no data governance controls appropriate for clinical environments. Using it with patient data is a HIPAA violation.

Gemini in Google Workspace (Enterprise Tier) — HIPAA-Eligible (with proper setup)

Google Workspace with Gemini at the enterprise tier is covered under Google's Business Associate Agreement, making it HIPAA-eligible for organizations with the appropriate Workspace subscription and BAA in place. (Google Workspace, July 16, 2025)

Google Workspace enterprise Gemini includes:

- BAA coverage (for covered services — confirm specific features with Google)

- Data Loss Prevention (DLP) policies to restrict Gemini's access to sensitive files

- Client-side encryption (CSE) available for the highest protection level — when CSE is enabled, encrypted data is inaccessible to Google or any AI assistant, including Gemini

- Information Rights Management (IRM) controls to prevent Gemini from retrieving protected files

- Healthcare Data Security Controls, including data classification labels and context-aware access

- Compliance Audit Logging

Important caveats: Not all Gemini features are covered under the BAA. The organization must confirm which specific features are BAA-eligible, implement access controls, and train staff. Consumer or experimental Gemini versions (outside enterprise Workspace) are never appropriate for ePHI. (Nightfall AI; Accountable HQ, 2025)

What "Actually Compliant" AI Use Looks Like: Technical Requirements

Meeting HIPAA's AI security requirements involves more than picking the right product tier. Here is what compliant AI deployment in a healthcare setting requires, based on current HHS/OCR guidance and NIST AI RMF 1.0:

Required Technical Safeguards

- AES-256 Encryption at Rest: All stored ePHI must be encrypted using Advanced Encryption Standard (AES) with a 256-bit key, making data unreadable without decryption keys

- TLS 1.2+ Encryption in Transit: All ePHI moved across networks must use Transport Layer Security (TLS) version 1.2 or higher, protecting data during transmission

- Multi-Factor Authentication (MFA): Every account with access to ePHI must require MFA — at minimum, something you know (password) plus something you have (authenticator app, security key, or phone) or something you are (biometric)

- Role-Based Access Controls (RBAC): AI platform access must be limited by job function, following the principle of least privilege: billing staff cannot access clinical notes, clinical staff cannot access payroll, and so on

- Audit Logging: Every interaction involving ePHI must be logged with user identification, timestamp, action taken, and data accessed. These logs must be retained and reviewed

- Formal HIPAA Security Risk Analysis: Before deploying any AI tool that touches ePHI, conduct a documented risk assessment identifying threats, vulnerabilities, impact, and mitigating controls

(HHS/OCR NPRM, January 6, 2025; NIST AI RMF 1.0, January 26, 2023)
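Two of the safeguards above are easy to illustrate in a few lines. The sketch below shows a client-side TLS context that refuses anything older than TLS 1.2, and a deny-by-default access check that requires both a completed MFA challenge and an explicit role grant. The role names, resource names, and the `can_access` helper are hypothetical; a real deployment would rely on the identity provider and the AI platform's own admin controls rather than hand-written code.

```python
import ssl


def tls12_client_context() -> ssl.SSLContext:
    """Client context that verifies certificates and rejects anything below TLS 1.2."""
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


# Hypothetical role-to-resource map, for illustration only.
ROLE_PERMISSIONS = {
    "billing": {"claims", "insurance"},
    "clinical": {"clinical_notes", "care_summaries"},
    "front_desk": {"scheduling"},
}


def can_access(role: str, resource: str, mfa_verified: bool) -> bool:
    """Deny by default: access needs a completed MFA challenge and an explicit grant."""
    if not mfa_verified:
        return False
    return resource in ROLE_PERMISSIONS.get(role, set())


print(can_access("billing", "claims", mfa_verified=True))           # True
print(can_access("billing", "clinical_notes", mfa_verified=True))   # False
print(can_access("clinical", "clinical_notes", mfa_verified=False)) # False
```

The design choice worth noting is the default: an unknown role or a missing MFA check results in denial, not access.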

NIST AI Risk Management Framework (AI RMF 1.0): Your Governance Foundation

The NIST AI Risk Management Framework (AI RMF 1.0) is a voluntary framework published by the National Institute of Standards and Technology on January 26, 2023, designed to help organizations identify, assess, and manage risks from AI systems. It operates through four core functions: GOVERN, MAP, MEASURE, and MANAGE. In July 2024, NIST released NIST AI 600-1, the Generative AI Profile, a companion to the AI RMF that addresses risks specific to generative AI, including large language models (LLMs) like ChatGPT and Gemini.

Healthcare organizations deploying AI tools should use the NIST AI RMF as their governance documentation framework. If OCR investigates, NIST AI RMF documentation demonstrates that your organization made structured, evidence-based decisions about AI risk — not that someone started pasting patient data into a chatbot and hoped for the best.

The four functions applied to healthcare AI:

- GOVERN: Establish AI policies, acceptable-use standards, and accountability structures before deployment. Designate who owns AI governance decisions.

- MAP: Identify which AI tools process ePHI, which workflows they touch, and what the risk exposure is for each.

- MEASURE: Assess and score risk levels. Test whether controls are working. Include AI tools in your HIPAA Security Risk Analysis.

- MANAGE: Implement controls, monitor AI behavior, respond to incidents, and maintain ongoing oversight.
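As a rough illustration of the MAP and MEASURE steps, an AI tool inventory can start as a simple list that records, for each tool, whether it touches ePHI, whether a BAA is signed, and who owns it. The field names and sample entries below are assumptions made for this sketch; NIST does not prescribe a schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AITool:
    name: str
    touches_ephi: bool   # MAP: does any workflow send patient data to this tool?
    baa_signed: bool     # Is a signed BAA on file with the vendor?
    owner: str           # GOVERN: who is accountable for this tool?


def unmanaged_ephi_tools(inventory: list[AITool]) -> list[str]:
    """MEASURE: names of tools that handle ePHI without a signed BAA."""
    return [t.name for t in inventory if t.touches_ephi and not t.baa_signed]


# Hypothetical inventory entries.
inventory = [
    AITool("ChatGPT for Healthcare", True, True, "Practice Manager"),
    AITool("Consumer chatbot (personal account)", True, False, "unassigned"),
    AITool("Grammar checker", False, False, "Office Admin"),
]

print(unmanaged_ephi_tools(inventory))
# ['Consumer chatbot (personal account)']
```

Anything this check returns is a candidate for the MANAGE step: block it, replace it, or bring it under a BAA.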

For independent practices and small group providers, no one hands you this framework. The responsibility is entirely yours — unless you partner with a qualified MSP.

The Shadow AI Problem: Personal Devices and Unauthorized Tools

This is the hardest part of the AI compliance challenge, and it deserves plain language.

An employee who opens a free ChatGPT tab on their personal phone during a busy workday and pastes patient information into it creates a HIPAA exposure that is:

- Invisible — it doesn't appear in your network logs unless you have cloud application behavior monitoring

- Immediate — the violation occurs at the moment of transmission

- Nearly irreversible — once ePHI enters a non-covered platform, you cannot un-transmit it

IBM's 2025 data shows that shadow AI (unauthorized AI tools) was involved in 20% of organizational breaches. Without monitoring, detection depends entirely on every employee making the right call, in the middle of every busy, stressful workday. That is not a compliance strategy.

Preventing it requires two layers working together:

- Technical controls: Cloud application behavior monitoring that detects ePHI leaving to unauthorized platforms; network-level blocking of non-approved AI services; device management policies that extend to personal devices accessing practice systems

- Organizational controls: A written AI acceptable-use policy; staff training that makes the consequences genuinely clear; onboarding processes that include AI tool authorization procedures

Without both layers, you're relying on hope. That's not a defensible compliance posture.
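One small piece of the technical layer can be sketched as a pre-submission screen that flags obvious identifiers before text leaves the practice. The patterns below are illustrative assumptions and deliberately simple. Pattern matching will miss names, diagnoses, and most free-text details, so this is a safety net, not a de-identification method and not a substitute for monitoring or training.

```python
import re

# Illustrative patterns only; real ePHI takes many forms these will not catch.
IDENTIFIER_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "phone": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "date": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
    "mrn": re.compile(r"\bMRN[:#]?\s*\d{5,}\b", re.IGNORECASE),
}


def find_identifiers(prompt: str) -> list[str]:
    """Return the sorted labels of every pattern that appears in the prompt."""
    return sorted(
        label for label, pattern in IDENTIFIER_PATTERNS.items() if pattern.search(prompt)
    )


def is_safe_to_send(prompt: str) -> bool:
    """True only when no known identifier pattern is present."""
    return not find_identifiers(prompt)


sample = "Patient MRN: 1234567 seen on 03/14/2026, call 205-555-0142"
print(find_identifiers(sample))  # ['date', 'mrn', 'phone']
print(is_safe_to_send("Draft a polite reminder about flu shot availability"))  # True
```

A passing result means only that none of these specific patterns matched; staff judgment and the acceptable-use policy still decide what belongs in a prompt.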

Why a Software Subscription Is Not Enough: The MSP Case

Even if your practice subscribes to ChatGPT for Healthcare today and signs the BAA by end of week — consider whether you can confidently confirm all of the following:

- ☐ The BAA has been reviewed for completeness and enforceability by someone qualified to evaluate it — not just filed away

- ☐ MFA is deployed and actively enforced on every single account with AI access, including shared accounts

- ☐ Audit logs are being captured, retained for the appropriate period, and actually reviewed by a qualified person

- ☐ Staff can articulate the difference between what is safe to enter into an AI prompt and what isn't

- ☐ Your broader IT environment is secure enough that a breach via a different vector wouldn't expose AI-processed data

- ☐ You have a documented, tested incident response plan for an AI-related breach

- ☐ Your HIPAA Security Risk Analysis has been updated to include your AI tools

- ☐ You have an asset inventory that includes all systems touching ePHI

A software subscription covers your relationship with one vendor. A qualified MSP addresses the full framework.

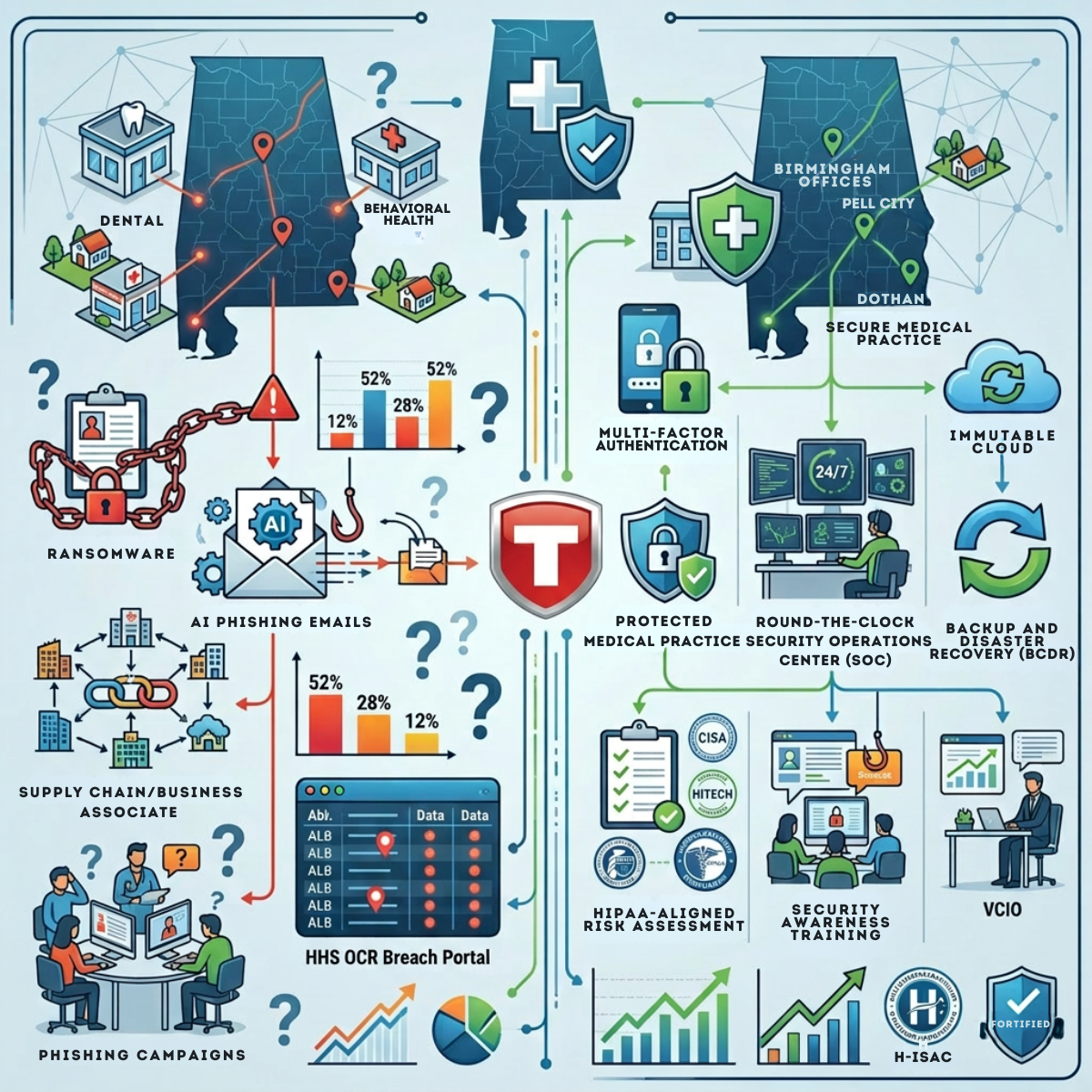

How AllTech IT Solutions Protects Birmingham Healthcare Practices

AllTech IT Solutions has been protecting Alabama healthcare organizations since 2004 — more than two decades operating in the exact regulatory environment Birmingham practices face today.

Why Choose AllTech?

Credentials:

- Alabama's #1 ranked Computer Security Service (Best of BusinessRate, 2025, independently verified via Google Reviews)

- #2,582 on the 2025 Inc. 5000 list of America's fastest-growing private companies, reflecting 163% revenue growth over three years

- 99.3 / 100 CSAT score from 148 verified reviews (ConnectWise SmileBack platform)

- 1,000+ Alabama businesses served

AllTech's Healthcare AI Security Services

1. Cybersecurity Risk Assessment (Free for Qualified Practices)

AllTech audits your full IT environment, scanning for vulnerabilities, reviewing access controls, and mapping your gaps against HIPAA, NIST CSF 2.0, and NIST AI RMF 1.0. The deliverable is an executive-level risk report with a prioritized remediation roadmap. This is the logical first step for any Birmingham practice unsure whether its AI tool usage is compliant.

What's included:

- Full network and endpoint vulnerability scan

- Review of security policies, access controls, and user practices

- Gap analysis against HIPAA, NIST, and AI-era requirements

- Risk scoring and executive risk profile report

- Prioritized remediation roadmap with cost estimates

2. MFA Deployment and Enforcement

AllTech deploys and manages multi-factor authentication across your entire organization — including AI platforms, EHR/EMR systems, and cloud applications — with role-based access policies, device trust enforcement, and ongoing compliance reporting. Not set-up-and-forgotten. Actively managed.

3. Cloud Application Behavior Monitoring

Continuous monitoring of user activity across Microsoft 365, Google Workspace, and cloud SaaS platforms. Detects and automatically responds to risky behaviors: mass file downloads, unusual login patterns, sensitive content shared outside the organization, and unauthorized AI platform access.

Example automated rules:

- If a file is shared with an unapproved domain → Revoke sharing, notify IT

- If 100+ files are downloaded in 10 minutes → Lock account, alert security

- If a user accesses an unauthorized AI platform → Alert and flag for review

This directly addresses the shadow AI risk that software subscriptions cannot see.
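The "100+ files in 10 minutes" rule above is a sliding-window count. The sketch below shows the general idea, not any monitoring product's actual implementation. The class name and defaults are assumptions, and it expects events for each user to arrive in time order.

```python
from collections import deque
from datetime import datetime, timedelta


class DownloadRateRule:
    """Flag a user once they reach max_files downloads inside a rolling window."""

    def __init__(self, max_files: int = 100, window: timedelta = timedelta(minutes=10)):
        self.max_files = max_files
        self.window = window
        self._events: dict[str, deque] = {}

    def record(self, user: str, when: datetime) -> bool:
        """Record one download; return True when the threshold is reached."""
        events = self._events.setdefault(user, deque())
        events.append(when)
        # Drop downloads that have aged out of the window.
        while events and when - events[0] > self.window:
            events.popleft()
        return len(events) >= self.max_files


rule = DownloadRateRule()
start = datetime(2026, 4, 1, 9, 0, 0)
# One download per second: the 100th download trips the rule.
tripped = [rule.record("jdoe", start + timedelta(seconds=i)) for i in range(100)]
print(tripped[98], tripped[99])  # False True
```

A `True` result is where the response in the rule would be triggered, such as locking the account and alerting security.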

4. SIEM-Based Audit Logging and Compliance Reporting

AllTech's Security Information and Event Management (SIEM) platform captures the complete audit trails required under HIPAA Security Rule and NIST CSF 2.0. Every AI query involving patient data is logged. If OCR investigates, you have documented evidence. That documentation is the difference between a manageable situation and a catastrophic one.
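For a sense of what a single audit entry might contain, here is a minimal sketch of a structured log line with the four fields an audit log needs: who, when, what action, and which data. The field names and the `audit_record` helper are hypothetical and do not describe any particular SIEM's format. The sketch logs a reference to the record rather than the prompt text, on the assumption that the log itself should not become another copy of ePHI.

```python
import json
from datetime import datetime, timezone


def audit_record(user_id: str, action: str, resource: str) -> str:
    """Build one JSON audit line: who, when (UTC), what action, which data."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "action": action,
        "resource": resource,  # a reference to the record, not its contents
    }
    return json.dumps(entry, sort_keys=True)


line = audit_record("u-0017", "ai_query", "patient/12345/care_summary")
print(line)
```

Emitting one JSON object per line keeps entries easy to ship to a log platform and easy to search during a review.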

5. Staff Security Awareness Training

Monthly interactive cybersecurity training with realistic phishing simulations and industry-specific paths for HIPAA compliance. Includes documentation of training completion for audit purposes. This directly addresses the human behavior problem — where the IBM 2025 data confirms most breaches actually begin.

6. Incident Response Planning and Execution

AllTech builds a custom Incident Response Handbook for each client before anything goes wrong. If a breach occurs — AI-related or otherwise — AllTech provides structured containment, forensic analysis, regulatory communication guidance (including HHS/OCR and Alabama AG notification), and post-incident hardening.

7. Virtual CIO (vCIO) Services for Healthcare AI Strategy

AllTech's vCIO service provides healthcare practices with executive-level IT strategy — including AI governance policy development, vendor evaluation (BAA review, security assessment), and multi-year technology roadmapping aligned to HIPAA and NIST requirements. SMBs get CIO-level expertise without a CIO-level salary.

Common Mistakes Birmingham Healthcare Practices Make with AI Tools

1. Assuming "Enterprise" Means Compliant

Subscribing to ChatGPT Enterprise is not the same as a HIPAA-compliant deployment. The BAA covers OpenAI's obligations. Everything on your side — access controls, training, audit logs — is still your responsibility.

2. Using ChatGPT Business and Assuming BAA Coverage Exists

OpenAI explicitly does not offer BAAs for ChatGPT Business. Only sales-managed Enterprise and ChatGPT for Healthcare accounts qualify. (OpenAI)

3. Overlooking Personal Device Access

Employees using personal phones or laptops to access AI tools create exposures completely invisible to your network controls — unless you have cloud behavior monitoring in place.

4. Filing the BAA Without Reviewing It

A BAA that doesn't cover the specific features or data types you're using provides inadequate protection. BAAs need legal and compliance review, not just filing.

5. Skipping the Risk Assessment

HIPAA's Security Rule requires a risk analysis any time new technology is introduced that might affect ePHI. Deploying AI without a documented risk assessment is itself a HIPAA violation — separate from any data exposure.

6. Treating Compliance as a One-Time Event

AI tools update frequently. OpenAI and Google change their terms, features, and covered endpoints regularly. Your compliance posture requires ongoing monitoring, not a single audit.

7. Ignoring Alabama's 45-Day Clock

Healthcare organizations in Alabama face a 45-day breach notification deadline under state law — stricter than HIPAA's 60-day federal window. Many practices are unaware of the state-level obligation.

Frequently Asked Questions

Q: Is free ChatGPT HIPAA compliant?

A: No. Free ChatGPT (and ChatGPT Plus and Pro) is not HIPAA compliant. OpenAI does not offer a Business Associate Agreement (BAA) for consumer-tier accounts, and default settings allow data to be used for model training. Using any of these plans with electronic Protected Health Information (ePHI) constitutes a reportable HIPAA breach at the moment of transmission. (OpenAI, Enterprise Privacy, January 2026)

Q: Which version of ChatGPT can healthcare practices legally use with patient data?

A: Only ChatGPT for Healthcare and ChatGPT Enterprise (sales-managed accounts) are HIPAA-eligible, because only these tiers allow organizations to execute a Business Associate Agreement (BAA) with OpenAI. ChatGPT Business is explicitly excluded from BAA eligibility. (OpenAI, Enterprise Privacy, January 2026)

Q: Is Google Gemini HIPAA compliant?

A: Consumer Google Gemini (accessed via gemini.google.com with a personal Google account) is not HIPAA compliant. However, Gemini in Google Workspace at the enterprise tier is covered under Google's BAA, making it HIPAA-eligible when properly configured with healthcare data security controls, access restrictions, and staff training. (Google Workspace, July 2025; Nightfall AI, 2025)

Q: What is a Business Associate Agreement (BAA) and why does it matter for AI tools?

A: A Business Associate Agreement (BAA) is a legally required contract under HIPAA between a healthcare covered entity and any vendor that creates, receives, maintains, or transmits electronic Protected Health Information (ePHI) on its behalf. Without a signed BAA, using an AI tool with patient data is a HIPAA violation — regardless of whether data is actually misused. (HHS/OCR)

Q: What are the HIPAA fines for using non-compliant AI tools with patient data?

A: HIPAA civil monetary penalties for willful neglect reach up to $73,011 per violation with an annual cap of $2,190,294 per violation category (effective January 28, 2026, per HHS inflation adjustment). The average U.S. healthcare data breach costs $7.42 million (IBM, 2025), with legal, regulatory, remediation, and reputational costs factored in. (ProspyrMed, 2026; IBM Cost of a Data Breach Report 2025)

Q: Does Alabama have stricter data breach notification rules than HIPAA?

A: Yes. Alabama's Data Breach Notification Act requires organizations to notify affected individuals and the Alabama Attorney General within 45 days of determining a breach has occurred — 15 days faster than HIPAA's federal 60-day notification window. Late notification carries penalties up to $5,000 per day after the deadline. (Alabama Data Breach Notification Act, 2018)

Q: What technical controls are required for HIPAA-compliant AI use in healthcare?

A: HIPAA-compliant AI deployment in healthcare requires, at minimum: a signed BAA with the AI vendor; AES-256 encryption at rest; TLS 1.2+ encryption in transit; multi-factor authentication (MFA) on all accounts accessing ePHI; role-based access controls; audit logging for all AI interactions involving patient data; and a completed HIPAA Security Risk Analysis before deployment. (HHS/OCR; NIST AI RMF 1.0)

Q: Does signing a BAA with OpenAI or Google make my AI deployment fully HIPAA compliant?

A: No. A signed BAA covers the vendor's obligations, not yours. Compliance on your side still requires proper configuration of access controls, MFA enforcement, audit logging, staff training, a completed HIPAA Security Risk Analysis, and an AI acceptable-use policy. The BAA is the starting line, not the finish line.

Q: Can employees use AI tools on personal phones for work in a healthcare setting?

A: Not with patient data. An employee who enters ePHI into a consumer AI tool on a personal device creates a HIPAA breach that is invisible to standard network monitoring and nearly impossible to remediate after the fact. Preventing it requires both technical controls (cloud application behavior monitoring, device management policies) and organizational controls (written AI acceptable-use policies and staff training). (HHS/OCR; IBM, 2025)

Q: Is ChatGPT Business eligible for a BAA?

A: No. OpenAI explicitly states that ChatGPT Business is not eligible for a Business Associate Agreement. Only sales-managed ChatGPT Enterprise accounts and ChatGPT for Healthcare customers can execute a BAA with OpenAI. (OpenAI, Enterprise Privacy, January 2026)

Key Takeaways

- Free ChatGPT and consumer Gemini are not HIPAA compliant. No BAA = immediate breach upon transmission of ePHI.

- Only ChatGPT for Healthcare, ChatGPT Enterprise, and Google Workspace Gemini (enterprise tier) are HIPAA-eligible — and only with a signed BAA.

- A BAA covers vendor obligations only. Your compliance requires encryption, MFA, audit logging, staff training, risk assessment, and incident response planning.

- Shadow AI — unauthorized tools on personal devices — was involved in 20% of breaches in 2025. Monitoring plus policy is required.

- HIPAA fines now reach $2.19M annually per violation category. Alabama's 45-day breach notification deadline is stricter than HIPAA's federal 60-day window.

- An MSP like AllTech addresses the full compliance stack. A software subscription covers only the vendor relationship.

- NIST AI RMF 1.0 documentation is your best defense if OCR investigates. Structure your governance to align.

Next Step

Get Your Free Cybersecurity Risk Assessment Today

AllTech IT Solutions offers a free Cybersecurity Risk Assessment for Birmingham-area healthcare organizations. We'll evaluate your full IT environment against HIPAA, NIST CSF 2.0, and NIST AI RMF 1.0 — and deliver an executive-level report with a prioritized remediation roadmap. No obligation. Real data.